The Spark that Lit the Hybrid Fire, Part II

The future of work moment, autopilot to copilot, AI vs IA, 10x you, and mindset over skillset

Last time we talked about using AI to tap into “unthinkable thoughts” and how cognitive revolutions have occurred throughout history. We used the lens of “extended mind theory” to better understand optimistic takes on the human-AI hybrid and dismantled some headline fears around AI tools.

In Part II, we’re going to draw the thread from thoughts to actions—if we’re able to tap into new kinds of cognitive processes, how might this impact what we’re able to do?

“Civilization advances by extending the number of important operations which we can perform without thinking about them.” - Alfred North Whitehead (1911)

“Consider a future device for individual use, which is a sort of mechanized private file and library. It needs a name, and to coin one at random, ‘memex’ will do. A memex is a device in which an individual stores all his books, records, and communications, and which is mechanized so that it may be consulted with exceeding speed and flexibility.”

These words were written by Vannevar Bush in his 1945 essay “As We May Think”, where he envisioned a device that would enable users to create and follow associative trails of information and then share them with other researchers. Bush’s vision of the Memex inspired generations of technologists, including Douglas Engelbart, who stumbled across Bush’s article and was inspired to build the computer mouse and the hypertext links that would later power the modern World Wide Web.

The potential of a Memex as a knowledge partner that “creates and follows trails of information” has never been fully realized. After Engelbart, the PageRank algorithm that Larry Page developed to improve internet search aimed to surface mined human intelligence by drawing on the crowd-sourced accumulation of human decisions about which information sources are valuable. It remains the default way most of us query information.

That is, until now.

The Future of Work Moment

“We are here today to talk about something that’s very fundamental to the human experience, the way we work. Most specifically, the way we work with computers. In fact, we’ve been on this continuous journey towards human-computer symbiosis for several decades. Starting with Vannevar Bush’s vision that he outlined in his seminal 1945 essay, As We May Think. Bush envisioned a futuristic device called Memex that would collect knowledge, make it easy for humans to retrieve that knowledge with exceeding speed and flexibility. It’s fascinating to see that someone postulated even back then so vividly what an intuitive relationship between humans and computing could be.

Since then, there have been several moments that have brought us closer to that vision. In 1968, Douglas Engelbart’s “Mother of All Demos” showed the remarkable potential of graphical user interface, including things like multiple windows, pointing and clicking with the mouse, word processing that is full screen, hypertext, video conferencing to just name a few. Later, the team at Xerox PARC made computing personal and practical with Alto that ushered in the personal computing era. Then, of course, came the web, the browser, and then the iPhone. Each of these seminal moments has brought us one step closer to a more symbiotic relationship between humans and computing.

Today, we are at the start of a new era of computing and another step on this journey.”

These words opened Microsoft’s Copilot demo. Satya Nadella, Microsoft’s CEO, goes on to explain that we’ve been on a journey with AI for years already, mostly through recommendation engines that inform what we watch, what we buy, and what we read, and that it has become so second nature to us that we often don’t recognize it.

“You could say that we’ve been using AI on autopilot, and now this next generation of AI, we’re moving from autopilot to copilot… as we build this next generation of AI, we made a conscious design choice to put human agency both at a premium and at the center of the product… as empowering as it is powerful.”

Microsoft’s demo of its Copilot capabilities was just one step in an evolving product partnership with OpenAI. Since Microsoft’s $10B capital injection into the AI company this past January, we’ve seen:

ChatGPT Plus - February 1st

Bing Chat - February 7th

ChatGPT + Whisper APIs (gpt-3.5) - March 1st

GPT-4 - March 14th

Microsoft 365 Copilot - March 16th

ChatGPT plugins - March 23rd

Other AI partnerships have since been announced, triggering a new era of development platforms. These include pairings of bigger and smaller players like Google and Replit, AWS and Hugging Face, and UiPath and Amelia. It is largely uncontested, however, that the Microsoft + OpenAI duo leads the pack due to their joint ability to productize with speed.

If ChatGPT was the iPhone moment, Microsoft Copilot was the “future of work” moment. Just as ChatGPT illustrated that “AI is here”, every organization is now grappling with a similar message: “the future of work is here”.

Autopilot to copilot

To properly understand the future of work moment, it’s worth parsing Microsoft’s “autopilot to copilot” positioning. It has gone relatively unexamined compared to OpenAI's consumer plays, but given Microsoft's dominance in the work tech space, it is equally significant for examining AI’s impact on knowledge work and intellectual life.

The demo itself raised a few observations:

Today’s productization of AI is breaking down old paradigms and dichotomies, such as automation vs augmentation.

Ecosystem tools are poised to win in this “new era of computing” due to their integration of data across various tools (take info from my brief, turn it into a PPT, summarize my video call, etc.)

Microsoft’s focus on productivity, not creativity, was intentional and will resonate with C-level executives focused on cost cutting in "The Age of Efficiency", leading to rapid adoption.

While both OpenAI and Microsoft have been careful in how they’ve developed and released new AI capabilities, focusing on human + AI compatibility, the speed is unprecedented, even for tech. So much so that, just a few weeks after the stream of announcements, thousands of AI experts, technologists, and researchers signed the Future of Life Institute’s open letter calling for a pause on “giant AI experiments”.

At the time of this writing, the letter has over 25,000 vetted signatures, including high-profile names like Elon Musk, Steve Wozniak, Yuval Noah Harari, Andrew Yang, Yoshua Bengio, and more. For a letter with so many high-profile signatories, it is ambitiously abstract, surprisingly short, and relatively unfocused, citing concerns from existential risk to misinformation to job impact. There are many reasonable arguments both for and against a “pause” (I won’t go through them here), but what the letter echoed, and what our “journalists” and headlines continue to regurgitate, was a familiar fear known as automation anxiety, which was as unsurprising as it was ill-formed:

“Contemporary AI systems are now becoming human-competitive at general tasks, and we must ask ourselves… Should we automate away all the jobs, including the fulfilling ones? Should we develop nonhuman minds that might eventually outnumber, outsmart, obsolete and replace us?”

We’ve debated these questions for centuries and have yet to see the complete redundancy of humanity in one fell swoop. “But could this time be different?” Well, if we're going to shift our attention to what might make this time different, let's also consider another difference: we have more data and experience than we’ve ever had to understand how innovation, growth, and technological change have occurred over time. At the same time, the same powerful technology we’re reacting to can also help us solve problems in response. We also know that these forces go hand in hand. We increase the wealth of an economy by making more people more productive. We didn’t know what jobs would be created with the decline of the agricultural world. We didn’t know what jobs would be created with the invention of the computer. Social media influencers, CrossFit and pilates instructors, areas of engineering from DevOps to prompt engineering, and hundreds more occupations would have been unimaginable just a few decades ago.

So, could this time be different? Yes, AND we can either continue trying to predict the unimaginable OR focus our time on asking better questions.

This post dives into a few:

What does it really mean to automate work vs augment it? Are these complementary or substitution effects? Do these dichotomies even hold true with current AI?

AI is out of the lab, so how should the average worker respond? What is the best way to think about AI products so we might be able to adapt?

As we discussed last time, today's AI is close to magic in its fluency in human language. As a general purpose tool that “speaks our language”, it enables us to think broader, better, and faster, creating a tighter feedback loop between mind and machine. As this piece will argue, this is cause for excitement, not anxiety, if approached in the right way. Early research on both the productivity and creativity impacts of these tools demonstrates the same.

But rather than offer you blind optimism, we’ll dig deeper. The primary motivation for this blog’s name and focus, Atomized, is two-fold: 1) to better understand a thing by better understanding its “atomic unit” through a first principles lens and 2) to direct that understanding towards new permutations and recombinations of that atomic unit, rather than lamenting a legacy structure. And the primary motivation for this piece is to question some fundamental fears and assumptions about AI’s impact on work, starting with tech’s oldest dichotomy.

Tech’s Two Camps: AI vs IA

Does ChatGPT automate or augment your work?

The intellectual giants of computation like Bush, Engelbart, and others traditionally fell into one of two camps: those developing tools to automate human work and those developing tools to augment it.

This is more than a semantic distinction. In his book, Machines of Loving Grace, John Markoff argues that the core tension at the heart of computer science was motivated by two such researchers, John McCarthy and Douglas Engelbart, which eventually birthed two philosophical camps in AI research. McCarthy set out to build a working “artificial intelligence” (AI) that could simulate and replace human capabilities, while Engelbart built intelligence augmentation (IA).

But there is a paradox in this dichotomy that even Markoff admits to in his book: the same technologies that extend our abilities can also displace us. The paradox points to a choice that diminishes the power the dichotomy has in the world today: AI and IA are not opposed, but partners.

First, AI and IA need to be brought together to be effective in the world, and that is exactly the goal of today’s product builders. Second, an “extension” vs “displacement” dichotomy does not account for the adaptability and malleability of the human mind, or for our entire history as tool users. Displacement is not an end point, but a short-term event along a wider time horizon. Third, once we can see beyond the dichotomy, a better framework likely emerges as a synthesis of the two: artificial intelligence augmentation (AIA), the use of AI systems to extend what we’re able to think and do, creating one of the most powerful feedback loops we’ve ever seen:

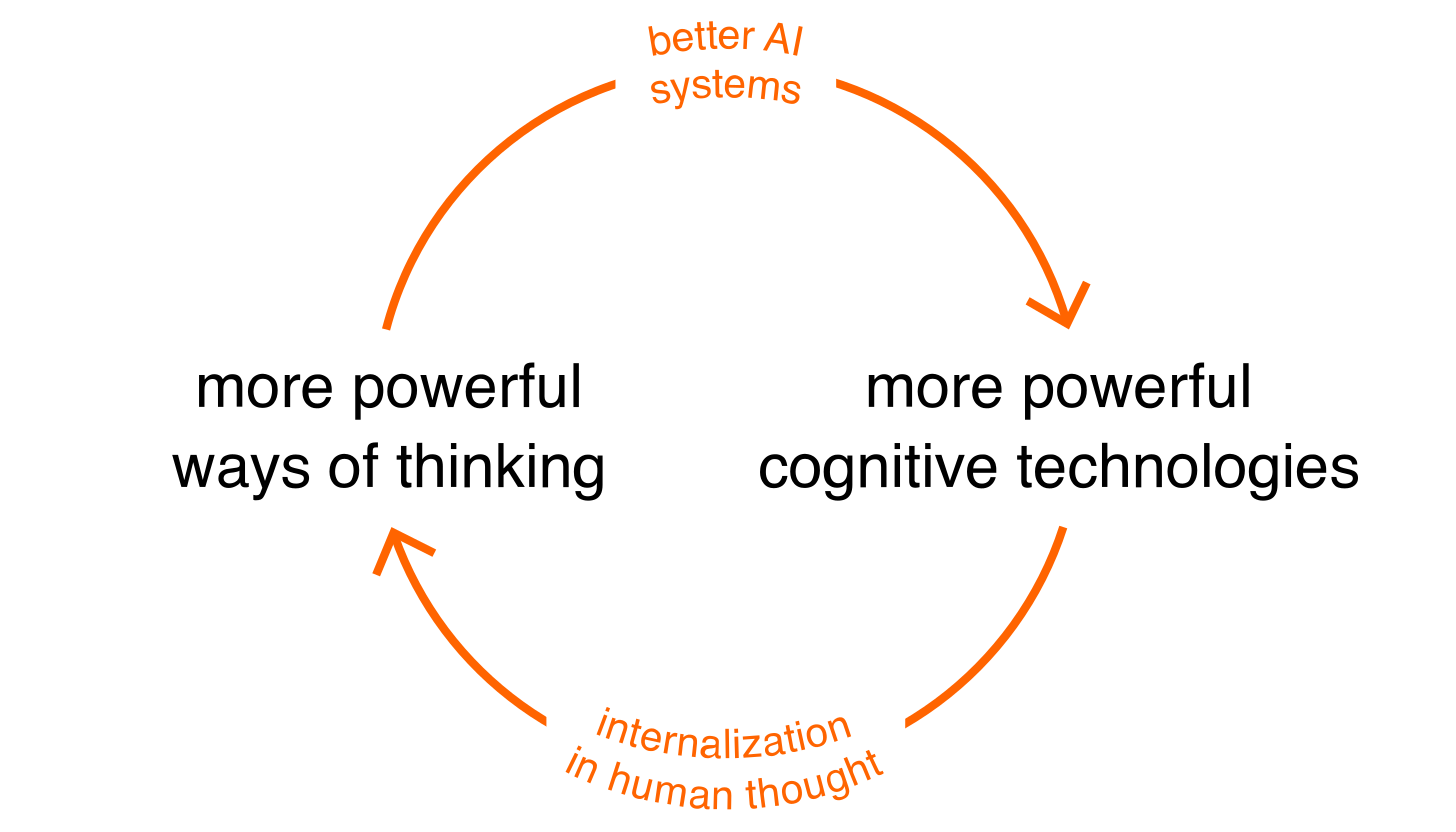

From a 2017 paper on the topic:

“AIs actually change humanity, helping us invent new cognitive technologies, which expand the range of human thought. Perhaps one day those cognitive technologies will, in turn, speed up the development of AI, in a virtuous feedback cycle.”

Tech analyst Ben Thompson recently made a similar point in passing when commenting on the Microsoft demo (emphasis my own):

“All of the demos throughout the presentation reinforced this point: the copilots were there to help, not to do — even if they were in fact doing a whole bunch of the work. Still, I think the framing was effective: it made it very clear why these copilots would be beneficial, demonstrated that Microsoft’s implementation would be additive not distracting, and, critically, gave Microsoft an opening to emphasize the necessity of reviewing and editing. In fact, one of the most clever demos was Microsoft showing the AI making a mistake and the person doing the demo catching and fixing the mistake while reviewing the work.”

And in 2018 he touched on a similar point when distinguishing between Google (automation-focused) and Microsoft (augmentation-focused), in a piece he called “Tech’s Two Philosophies”:

“This is technology’s second philosophy, and it is orthogonal to the other: the expectation is not that the computer does your work for you, but rather that the computer enables you to do your work better and more efficiently. And, with this philosophy, comes a different take on responsibility… That there are two philosophies does not necessarily mean that one is right and one is wrong: the reality is we need both.”

Here’s where I agree more with Thompson than I do with Markoff. Augmentation isn’t inherently better just because it doesn’t displace humans. In reality, both usually happen depending on your timeline, and we actually need both for enhanced productivity (which, again, is the primary channel of economic growth).

I’d also argue that this “next era of computing” is distinct in one crucial way: we are going from “software eats the world” to “AI eats software” and perhaps “AI eats the structure of everything” (I’ll expand in a later piece). AI is becoming more and more general purpose, giving rise to a new paradigm of design through AI augmentation, making displacement vs extension effects murkier than ever.

This becomes much clearer when you get into the granularity of most knowledge workflows. Most knowledge tasks, like writing articles, designing user interfaces, drafting business plans, creating marketing copy, and forecasting financials, consist of adapting the approach to an already solved problem to a slightly new context while focusing innovation on one or two vectors in a problem space. It is in those one or two skill vectors within a given workflow that most workers have a comparative advantage. While AI might automate tasks as they are done today, it will augment the person to either double down on their comparative advantage OR extend themselves into other tasks and skills not possible before.

As an example, VC investor and co-host on the All In Podcast, David Sacks, recently outlined how his use of ChatGPT has enabled him to extend more of his SaaS expertise through writing in less time. Writing is not his comparative advantage—his SaaS expertise as an operator and investor is. ChatGPT allows him to extend this expertise to more people by offloading parts of the writing tasks to ChatGPT.

A breakdown of workflow changes like this might look like:

inject specialized or novel cross-functional expertise into prompt to GPT-tool

assess first draft or output from GPT-tool

iterate a few times, conduct edits, embed more context

ask for a re-write in a different style, for a different audience, shorter or longer

assess final output; ask GPT-tool for feedback or critiques

use GPT-tool to generate 10 other formats of the final output (deck, tweets, summaries, etc.)

A workflow like this allows *anyone* to spend more time wherever their comparative advantage might lie, in whatever ways they want to spend that time. This has two obvious effects, among others:

There is more opportunity than ever for people with novel expertise to double down in producing more value with less

There will be more competition than ever making it increasingly hard for those that don’t lean into the new world of work to succeed in their domain

Cognitive Assistance

I am personally very excited about what work looks like in the age of “artificial intelligence augmentation”. But I also realize that many are not. I believe some of this is due to a few core misconceptions downstream of a false AI vs IA dichotomy.

False narratives of AI:

AI → systems with agency and will

AI → 1 to 1 human replacement

AI → reduction of human agency

To the first narrative, Francois Chollet, author of the Keras deep learning library, outlines thinking that is helpful here. He argues that the popular imagination centers on AI as creating artificial minds as agents with wills of their own, but that this is not inherent to AI itself (and definitely not of the AI we will have for the immediate future).

He outlines three kinds of AI that are possible:

Cognitive Automation

Cognitive Assistance

Cognitive Autonomy

Cognitive automation is similar to the “cognitive sourcing” we're already familiar with in our digital tools. He describes it as the “encoding of human abstractions in software” and then using that software to automate tasks. Nearly all common AI today falls in this category.

Cognitive assistance is where I think cognitive revolutions happen: it is using AI (or other cognitive technology) to *help us* make sense of the world, forge meaningful connections, and make better decisions. Chollet uses the term “extension of your own mind”, relating back to the extended mind view I argued for throughout my first piece in this series. Importantly, he argues that assistance is not a different kind of technology, but a different kind of application of the same technology. In other words, it is the application of AI through an IA lens that considers the interface through which it's deployed.

Cognitive autonomy, by comparison, is the creation of artificial minds that could thrive independently of us, existing for their own sake. This is the driver of automation anxiety that propels so much fear, yet it is largely the stuff of science fiction for the foreseeable future (yes, even in the world of GPT-5/6/7 and internet plug-ins).

AI → systems with agency and will?

Correction #1: AI comes in a variety of forms—the current leap with gen AI is being deployed as cognitive assistance, not cognitive autonomy

Secondly, when it comes to jobs, our new cognitive assistants will likely lead to job displacement, but we are unlikely to see full categories of jobs replaced immediately. The reasons for this are quite simple: the economy is a dynamic system, human desires and needs are infinite, and there is much more to labour markets than pure efficiency (transition costs, human preferences, social group dynamics, etc.). Industrialization gave us mass-produced goods AND a market for artisan, “local”, “green” and other types of goods in response. I suspect that AI-generated graphics, art, memes, and other online assets will displace some artists AND create whole new markets for others. There will be struggles and tensions in figuring this out, but the ultimate race for workers is not one of human vs AI. It will increasingly be one of human vs human + AI.

AI → 1 to 1 human replacement?

Correction #2: Our new cognitive assistants give superpowers to those who embrace them, increasing the competition between humans and humans + AI (not human vs AI)

Lastly, automation anxiety, while psychologically understandable, often relies on an exaggerated discounting of agency. “Job loss” is to be feared in a world where your output is fixed, a world in which what you can do in your domain with your skills using existing technology cannot change. In our world, humans are adaptive by nature, find meaning in growth, and possess both creative and curious qualities that enable cognitive extension in the first place. At the same time, tool builders have inherent incentives to make their AI products more user friendly, cheaper, and more effective, giving more and more people access to extremely complex cognitive agents. People who struggle to communicate, learn a language, or navigate complex UI can now access tools that make all of these tasks easier. People who have wanted to write blogs or books but never had the time can now co-create with an AI assistant. People who have wanted to tap into technical subjects like coding or data science can learn and build faster than ever.

AI → reduction of human agency?

Correction #3: Those who develop new superpowers are likely to cultivate enhanced agency, not less. These superpowers are not reserved for the few, but for the many, given how accessible these tools now are (and the incentives the builders have).

10x You

I think the key question open to workers and organizations right now comes back to comparative advantage: how might you embrace AI assistance on two axes for human + AI superpowers:

Intensifying your domain expertise (experience, knowledge, skills, expertise, productivity)

Expanding into other domains previously out of reach (interests, curiosities, methods, audiences)

There is a common concept in the tech sphere known as the “10x engineer”. What became hype for VCs and a meme for everyone else originated in a series of studies presented in a 1968 paper entitled “Exploratory Experimental Studies Comparing Online and Offline Programming Performance”, which reported a ten-fold difference in productivity and performance between the best and worst programmers.

The 10x engineer is experiencing a bit of a renaissance today with the creation of GPT-powered tools like GitHub Copilot and Replit that act as coding assistants, supercharging developers’ productivity. In fact, Amjad Masad, Founder of Replit, is bullish on the case for the 1000x developer, citing examples of his platform servicing app requirements in under 30 minutes. The newest wave (as of one week ago) of GPTs is now turning prompts into actions. Known as AutoGPTs, these are autonomous agents that can prompt themselves and use the internet to accomplish tasks. From what I understand, there are still lots of kinks to work out, but in 3-6 months’ time it’s pretty feasible to think that we’ll have an early adopter ecosystem of personal agents helping us not just think better, but accomplish way more.

Knowledge work obviously goes far beyond coding. This creates an opportunity space for anyone to retrofit a hyper-competitive “10x you”.

Design your Comparative Advantage

Given how fast the space is moving (I had to rewrite this piece several times to keep up), I’d argue *now* is the best time to dive in and rethink your comparative advantage if you’re interested in being a top performer in the future.

Unlike some of the papers cited here, I don’t have this down to an exact science, and much of this might change, but I am constantly asking myself the following questions, coming down to developing both depth and range:

What are the 2-3 primary things (skills, domains, experiences) I want to dive extremely deep in and be known for?

What am I consistently driven by and attracted to?

What am I consistently questioning or frustrated by?

How can AI tools supercharge that focus?

What are the 3-5 complementary things (skills, domains, experiences) I want to expand into that people like me don’t normally do or know about?

What novel interests or quirks do I already have?

What do I love doing in my off time that I can integrate pieces of?

How can AI tools accelerate that range?

Once you have some initial answers to those questions, here’s how I think about the different ways I’ve already been successful in going deep and wide in my domain with a tool like ChatGPT:

Explore

Explore more latent spaces and connections between things → make new connections

Explore new personas or perspectives → engage in dialogues

Stress test intuitions, insights, or hunches with low judgment → create feedback loops

Expand

Expand range on a particular topic → make wider connections

Expand into parallel skill or competency spaces → branch into tech/non-tech

Enhance social skills → practice influence, negotiation, etc.

Intensify

Deepen existing expertise → drill down

Challenge existing viewpoints with feedback/critiques → engage the other side

Do more of what you’re great at → do more

Outsource

Speeding up tedious work through outsourcing → delegate research

Outsourcing work I don’t want to be doing at low cost → automate proof-reading

Outsourcing incremental steps to produce higher value → brainstorm ideas

Fortunately (or unfortunately, depending on how you look at it), I think a lot of how one leverages gen AI to create comparative advantage is up to the individual worker. Some might find that overwhelming, but I don’t think it needs to be. ChatGPT’s success as one of the fastest-adopted consumer tools demonstrates the usability and ease with which average people can make use of it. Millions of people now have free access to generalizable cognitive assistance, which is being supercharged by the fastest developer ecosystem to emerge since mobile applications.

Skillsets vs Mindsets

Lastly, I think one of the biggest shifts workers will have to go through will be one that requires a transition of mindsets, not skillsets. If your comparative advantage was coding, data, finance, business, or legal analysis, that set of barriers to entry was just demolished (regulation will hold some up!). The comparative value is now shifting from knowing the detailed intricacies of rules-based systems (skills) to exploring novel and unique ways to build a 10x you (mindset). This is the clearest case yet for a growth mindset over a fixed one.

And the key to unlocking it all, as this series has emphasized, is language. How you think, how you work, and how you frame beliefs. Language is the spark.

By adopting a lens of comparative advantage alongside a growth mindset, today’s average worker can start building their own “Personal Monopoly”. While David Perell coined the term in relation to the goal of writing online, we can extend the concept to the value every knowledge worker is now able to create with new tools:

“The ultimate goal of writing online is to build a Personal Monopoly. It’s your unique intersection of skills, interests, and personality traits where you can be known as the best thinker on a topic… Forge a distinct path instead of copying what everybody else is doing. Work on ambitious projects, study the unexplored intersections of ideas and find the questions that people are asking but nobody is answering. There is a vast intellectual frontier waiting for you to find it.”

Just as the internet increases variance, it rewards differentiation. Due to the immense scale of the internet, the audience for almost any topic, service, or product numbers in at least the thousands. Just as agricultural tools reinvented the ways we were able to grow, cultivate, and distribute food, giving us everything from Costco mass distribution and $1.50 hot dogs to free-run eggs and farmers’ market strawberries, we’re likely to see even more differentiation in knowledge work, but with fewer constraints (software trends toward zero marginal cost). This means more competition, but it need not be plagued with high cognitive friction and steep learning curves. The most promising case for the average worker is rethinking what one can do with lower barriers to entry and more opportunity for differentiated value creation.

But—only “as we may think”.

Somewhere Vannevar Bush is smiling.