The Human-AI Hybrid Wins

The future of work with generative AI, imaginative superpowers, human-AI hybrid design, and expanding creative frontiers

“The Analytical Engine has no pretensions whatsoever to originate anything. It can do whatever we know how to order it to perform.” – Ada Lovelace (1840s)

“Those who can imagine anything, can create the impossible.” —Alan Turing (1950s)

In his 1994 award-winning science fiction novel, Permutation City, Greg Egan explores many concepts ahead of his time. Among them is the “Autoverse”, an artificial life simulator based on a cellular automaton, complex enough to represent a kind of artificial chemistry. This artificial chemistry supplies the simulated building blocks of artificial life: it is deterministic and internally consistent, and it follows rules different from those of real-world chemistry.

Unlike the recently popularized “Metaverse”, it is not an immersive environment for humans. It is a kind of game environment in which simulations can be run with different kinds of organisms and different prompts. The Autoverse is fairly primitive, composed of tiny environments populated with simple simulated life forms called Autobacterium lamberti, which are maintained by a community of enthusiasts obsessed with evolving more complex “life”. In Egan’s world, human brains can also be scanned and copied, producing digital renderings with complete subjective consciousness that exist in other VR environments.

The Autoverse and “human copies” come together when the main character, Paul Durham, hires an Autoverse enthusiast, Maria Deluca, to design an Autoverse program to generate expansive, evolvable life, while commissioning a famous virtual reality architect to build a full-scale VR city.

In other words, the Autoverse meets the Metaverse. Simulated life meets simulated worlds. As a sort of simulated “big bang”, this redefinition of what it means to live and create as a human, and thus humanity itself, through simulation is at the heart of Permutation City. If human brains can be copied, alternative worlds simulated, and computational power endlessly exploited, what does the next frontier of human creativity look like?

In Egan’s style, it’s an expansive question ahead of its time, but it raises a more pertinent one for today’s world: with creativity increasingly democratized, what does the future of work look like? Or more starkly, “how intelligence-limited have we been?”

Creative new worlds

Until now, AI has been mostly effective at generating recommendations or analyzing things for us—routine cognitive labour. AI has also been applied in routine physical labour through robotic systems both automating and augmenting segments of manufacturing work in factories.

But as of late 2022, generative AI (gen AI) is stealing the show. As a sort of 21st century Autoverse, AI systems are producing art, helping us write better, creating videos, assisting scientific discoveries, helping us map the brain, appearing in Congress, generating architectural designs, coding, and producing graphic designs. These tasks are mostly found in knowledge and creative work, comprising billions of workers, most of whom we’d consider to have “specialized skills” and in-demand expertise.

Taken to its logical conclusion, gen AI has the potential to disrupt even the most advanced knowledge work, meaning workers who are unprepared in 2-5 years will likely be at a competitive disadvantage. In contrast, those who adopt these new AI tools and the leverage they bring are poised to enter a creative new world.

So, what is gen AI and where did it come from? Simply put, generative AI is a branch of artificial intelligence that generates output from learned models of the world across multiple media (text, audio, video, images, etc.). This output is often used to create content (text, images, sounds, videos) that is often indistinguishable from “original” content made by humans.

GPT-3 (Generative Pre-trained Transformer 3), launched by OpenAI, is probably the most recognizable name when it comes to gen AI. The model was trained on large blocks of text gathered from Wikipedia and across the internet. It is also one of the most revolutionary in terms of its impact, for two primary reasons: 1) it is widely accessible through an experimental playground and API, and 2) it is more of a general-purpose model that can be used for embedding, fine-tuning, etc. to power a whole host of applications. In short, it dramatically lowers barriers to entry for developing an AI application that can also be fine-tuned for a wide variety of use cases.

Other tech titans, like Amazon, IBM, GitHub (Microsoft), and Google, have their own models, but these are more specialized, like AWS’s Polly, GitHub’s Copilot, and Amazon’s DeepComposer. The explosion of startups in this space is a more recent phenomenon, driven by the launch of OpenAI’s API in 2020 and the removal of its waitlist in late 2021.

A brief timeline of OpenAI milestones is also helpful in understanding the current boom in gen AI:

2015: OpenAI formally introduced to the world

2019: OpenAI Five beats Dota 2 world champions

2019: Microsoft partnership announced

2019: Solving Rubik’s Cube with a robot hand

2019: GPT-2 1.5B release

2020: OpenAI API announced - invite only (GPT-3)

2021: DALL-E announced

2021: OpenAI Codex announced

Late 2021: OpenAI API - no waitlist (GPT-3)***

2022: DALL-E 2 announced (we are here)

While most of the spotlight was on crypto, NFTs, and web3, 2021 (***) was an important year for AI’s productization at scale, one of the most exciting times for AI since 2012 when deep learning really took off. Not only are we seeing how gen AI applications can democratize creativity (consumption of AI), but API access, playground environments, and new open-source projects also democratize powerful problem-solving capabilities for builders (productization of AI).

Beyond the fanciful

At the time of this writing, Stability AI, the company behind a popular text-to-image AI program called Stable Diffusion, recently announced a “seed” raise of $101 million at a $1 billion valuation. The raise serves as critical validation of the company’s decentralized approach to AI development, which stands in stark contrast to that of big incumbents. Stable Diffusion is just one of several recent examples of text-to-image AI, alongside OpenAI’s DALL-E, Google’s Imagen, and Midjourney, but anyone can build on Stability AI’s code or use it to power their own commercial offerings. GPT-3 and Stable Diffusion are similar in that they serve as early examples of more accessible AND generalizable tools to power AI applications.

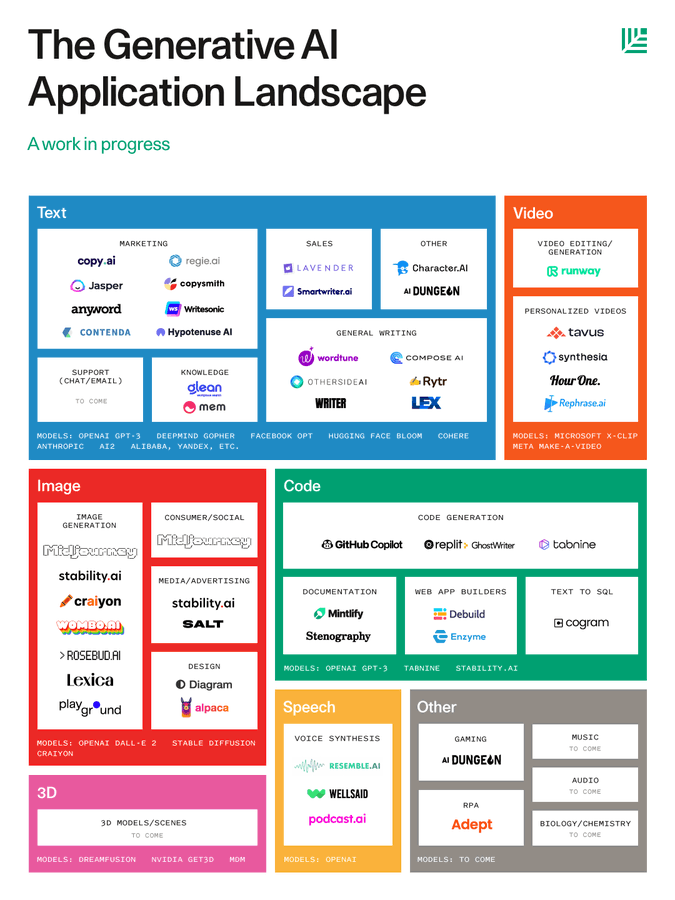

And investment in these applications is ramping up. Less than a week ago, Wired magazine ran a piece titled “AI’s New Creative Streak Sparks a Silicon Valley Gold Rush”, citing prominent investor Sarah Guo’s new $101 million fund focused on this stream of AI, launched from her new firm, Conviction. Just a few weeks ago, Jasper AI, a popular “AI content” developer, announced a $125 million investment at a whopping $1.5 billion valuation as its first round of fundraising to build a platform “that could help businesses and professional creators brainstorm and do their work more quickly and efficiently.” Other popular apps in the space include Regie.ai and Copy.AI (business content apps), Writer (AI writing for teams), Glean (AI-powered search for workplaces), and, most notable (imo), Replit (democratizing code-writing).

While investment in the space takes off, online virality has too. Sonya Huang, partner at Sequoia, published one of the first Gen AI Market Maps on Twitter in a pretty detailed thread citing excitement in the fast-moving space. As reported in a Protocol piece with her, recent activity like the moves above “solidifies the space as a sector and not just a handful of companies making fanciful images.”

In the interview, Sonya cites the “superpowers” that humans can gain by working with machines and believes that the gen AI hype is “absolutely justified.” She goes on to explain that she’s starting to see a shift from seeing gen AI as just fun models to seeing how they might actually “drive the future of how we work and the next big application companies.”

I tend to agree. We’re in dark times—inflation, recession, and global conflict—but gen AI might be the exact silver lining we need to spur the next stages of startups, the creator economy, and a solopreneur class we saw take shape during the pandemic.

Better together: the human-AI hybrid

In wrapping up her interview, Sonya admits that she actually had a hard time at first understanding possible applications for gen AI because she’s “not the most creative person”. She decided to test out some of her early thinking on the space with GPT-3 itself. The result was a blog post co-written by GPT-3 called “Generative AI: A Creative New World”.

“The dream is that generative AI brings the marginal cost of creation and knowledge work down towards zero, generating vast labor productivity and economic value—and commensurate market cap.” - Sonya Huang, Pat Grady, and GPT-3 (via Sequoia)

At the bottom of the post, there is a disclaimer noting that it was co-written with GPT-3, but that GPT-3 did not “spit out the entire article, but was responsible for combating writer’s block, generating entire sentences and paragraphs of text, and brainstorming different use cases… as a nice taste of the human-computer co-creation interactions that may form the new normal.” The post was both written with and features artwork informed by gen AI, showcasing just one example of the power of the human-AI hybrid: a new era of human and machine working together on cognitive tasks.

I’m excited by this. But I also know human behaviour is tricky to anticipate and attitudes towards new machine teammates are not always the most inviting. Is the world ready to transition into a human-computer co-creation “new normal”? The promise of a Creative New World should be exciting, but we will need to grapple with how we invite our new co-creators as friends, rather than facing off as foes.

Confronting techno-pessimism

Somewhat understandably, large segments of today’s society have grown tired of overly tech-infused narratives. Perhaps due to worn-out 90s cyberpunk dystopianism, heightened political division on social media, and the deteriorating mental health plaguing today’s youth, people are more skeptical of technology than they were ten or twenty years ago.

The techno-pessimism goes beyond just clickbait headlines and polarizing “misinformation”: people really do view technology more negatively than they used to, especially when it comes to their working lives. More than 80% of respondents surveyed by Pew Research in 2019 about automation and AI see automation doing most work in society in the next 30 years, with nearly 70% of them saying this will be a negative or bad thing. 76% of them think that inequality between the rich and poor would increase if this were to happen, and nearly 70% say it's unlikely that widespread automation will create many new, better-paying jobs for humans.

Pew also found, however, that while 80% of people see automation/AI doing most work by 2050, only 37% of employed adults say robots or computers will do the type of work that they do over this period. Other studies show that most white collar workers feel relatively confident that their skills will not become outdated due to automation/AI.

This tendency towards optimism bias—the inclination to believe that you are less likely to experience a negative event than other people—is a natural trait of human psychology. Put together though, not only are people more likely to view technology negatively, they are also less likely to anticipate its developments impacting their work or skills (and thus, prepare for it).

Getting workers to “win”

This piece evolved in many ways as I was writing in response to the following headline: “In the Battle with Robots, Human Workers are Winning.”

As I read, I actually agreed with most of the opening arguments in the piece. And to the author’s credit, the piece is a lot more nuanced than the headline purports. The author builds his arguments on what I call the “future of work is human” (FoWH) narrative, which basically says:

AI hype told us we were all going to be unemployed

But humans still have jobs (and actually, there’s a labour shortage—ha!)

Even in completely automatable jobs, humans still have jobs

Humans, therefore, have been underestimated

AI is likely to improve but there’s no evidence it will happen quickly

With most of this, I actually agree.

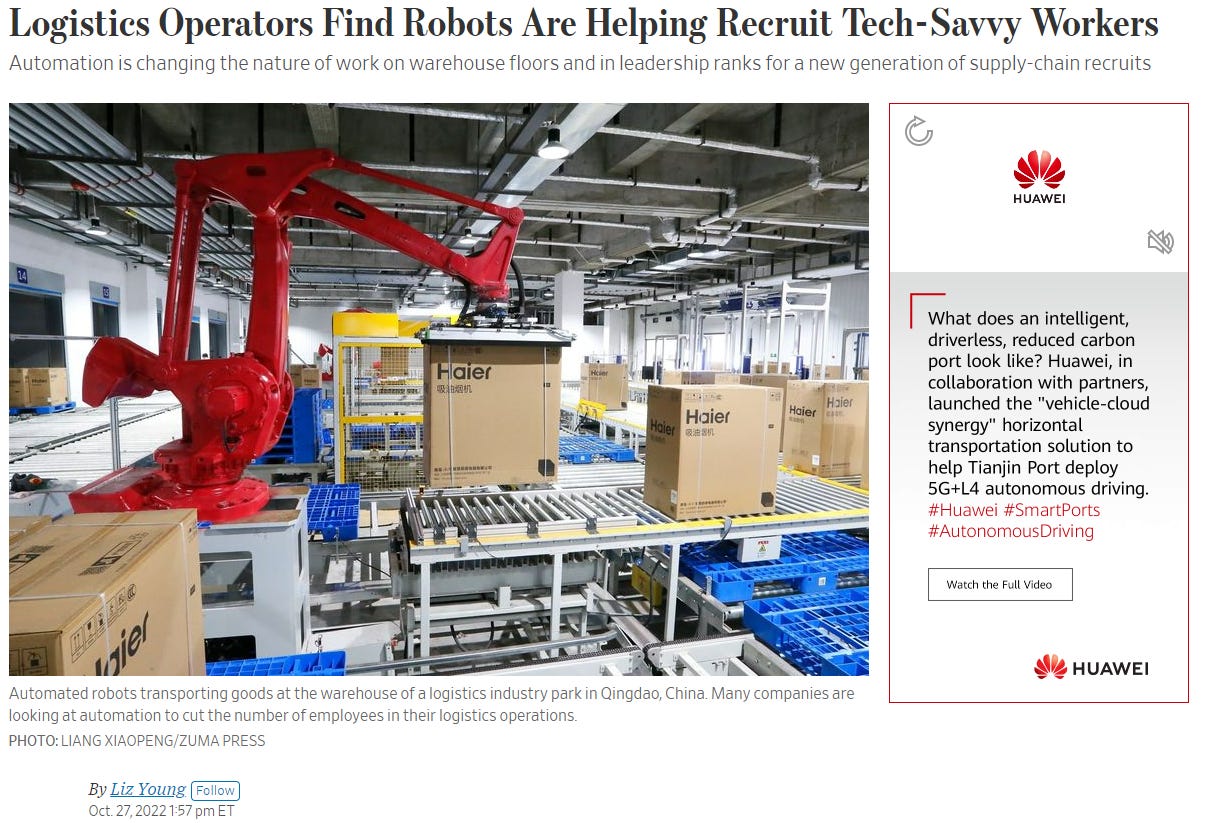

But the position and its conclusion, now held by many, are misleading: there is a lot of evidence that AI capabilities, commercialization, and adoption are ramping up. As I write this, the Wall Street Journal has just reported signs of manufacturers and logistics operators turning to automation both to ease supply chain issues and to attract younger, more tech-savvy talent. The recent rise in gen AI interest shows us that AI and automation don’t have to be perfect before they’re useful. They just have to make economic sense and bring value to customers through an interface layer that is likely to be adopted.

Again, I do think the humanized focus is directionally correct: humans have been underestimated in their capabilities when cast against algorithms and machines. But in its extreme form, the narrative leads to a glorified “human-purism” that we expect to persist in perpetuity, resulting in a misaligned “humans vs machines” dichotomy.

This is not only wrong, but dangerously ill-positioned at a time when AI might actually be the thing we need the most.

Here’s why.

#1: “Future of work is human” as an overcorrection from the 2010s job apocalypse craze

First, the FoWH narrative is an almost complete reversal from the AI job apocalypse just a decade ago.

Throughout the 2010s, articles like “Will Robots Steal Your Job?” dotted popular headlines, stoking fear of a robot-induced jobs apocalypse. They were largely provoked by an infamous Oxford study published in 2013 claiming that 47% of jobs were “highly susceptible to automation” by the year 2030. Other authors, like Brynjolfsson and McAfee, also sparked similar debate through books like The Second Machine Age. These authors argued that if the first machine age was about mechanizing physical tasks in factories, the second machine age will be about automating mental ones through digitization. The consulting world jumped on the “AI is coming for jobs” bandwagon and spat out its own calculations, one of which was a 2017 McKinsey study estimating 400-800 million workers potentially displaced by 2030. “The Global Skills Gap” and the “Reskilling Revolution” became the new big problems to solve. Stepping in to save the world as usual, Bill Gates suggested a tax on robots to prevent this from happening, and other tech figures and presidential candidates advocated for UBI as their preferred solution.

In retrospect, the fact that these fears appeared in the 2010s is understandable. The financial crash had just occurred in 2008 and depressed employment followed. Income inequality was top of mind as middle and working class wages continued to stagnate. As an undergraduate studying the topic during this time, I remember being buried in papers looking at the declining labour share of income and its interaction with technology as a primary driver. Of course, blaming the machines for misfortunes during periods of social unrest is not new. The comparison to the 19th century Luddite struggle and its smashing of machines makes sense here, but not in the way you might think. As I wrote in 2017 observing these attitudes at the time:

“Despite their modern reputation, the Luddite protests against the introduction of machines in new factory systems in the early 19th century weren’t about machines taking their jobs. Most protesters were skilled machine operators, and the technology they destroyed wasn’t particularly new either. Rather, it was a culmination of unfortunate political, economic, and technological factors that ran parallel to each other: widespread unemployment, economic upheaval, and war against Napoleon’s France. On March 11, 1811, growing frustrations with the system proliferated into a crowd of protesters demanding more work and better wages, smashing machines, and spreading throughout Nottingham, a textile manufacturing center. But the most common machine they attacked was the stocking frame, a 200-year-old knitting machine. The spark that fueled their fire wasn’t the introduction of the machine, itself.”

Viewed in this light, the 2010s era of automation anxiety can be better understood, as can the movement that now follows it, FoWH:

Could the Future of Work be more human? (Forbes)

The Path to prosperity: Why the future of work is human (Deloitte)

The future of work is uniquely human (MIT Technology Review)

The future of work is human (TechCrunch)

The Working Future: More Human, Not Less (Bain & Company)

Success in the Future of Work: What it means to be human (Visier)

#2: “Future of work is human” leads to a misrepresentative design approach

Secondly, this narrative has implications for how we design our technology, processes, and systems. There has not only been a stark shift in the narrative of work over the last 1-2 years, but it has been closely mirrored by more “human” approaches in design and development spaces. A design approach known as “human-centered design” is now common practice, intended to put the perspectives of real users at the center of the development process, keeping their wants, pain points, and preferences front of mind during every phase.

The approach is almost unquestionably hailed as the gold standard when it comes to deploying new technology in workplaces and thinking about talent strategies at large. From talent acquisition to layoffs to L&D to hiring decisions, as organizations have ramped up “digital transformation” efforts, they’ve increasingly swung from a tech-focused approach to human-centered design—the idea that any change or technology should empower the individual and be as accessible to as many people as possible.

I am completely supportive of this view. There is value in recognizing individual agency, needs, and pain points when introducing new technology into an organizational process or workflow. But the user and technology share an important and evolving relationship that becomes increasingly important as tools are embedded in new workflows. Taken to its extreme, a user-centric approach can become a user-only one–creating false dichotomies, unnecessary tradeoffs, and misplaced pessimism when technology is placed antagonistically against humans.

Similarly, as AI models become more sophisticated and distributed, human input into their model development will become increasingly important for goal alignment. For example, OpenAI’s GPT-3 now utilizes an update to its large language model, known as InstructGPT. To improve GPT-3, they utilized a method known as reinforcement learning from human feedback (RLHF) which uses human preferences as a reward signal to fine-tune the models.
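To make the “human preferences as a reward signal” idea a little more concrete, here is a minimal sketch of just the first half of RLHF: fitting a reward model to pairwise human comparisons. It is an illustration under loud assumptions, not OpenAI’s implementation. The two-number “features” per response (think helpfulness and rudeness scores), the linear reward model, and every function name are hypothetical and chosen only for the toy. Real systems score text with a large neural network and then use the learned reward to fine-tune the language model, a step not shown here.

```python
import math


def sigmoid(x: float) -> float:
    return 1.0 / (1.0 + math.exp(-x))


def reward(weights, features):
    """Hypothetical linear reward model: a weighted sum of response features."""
    return sum(w * f for w, f in zip(weights, features))


def preference_loss(weights, chosen, rejected):
    """Pairwise logistic loss: small when the human-preferred response scores higher."""
    margin = reward(weights, chosen) - reward(weights, rejected)
    return -math.log(sigmoid(margin))


def train_reward_model(comparisons, n_features, lr=0.1, epochs=200):
    """Fit the weights by gradient descent on the pairwise loss."""
    weights = [0.0] * n_features
    for _ in range(epochs):
        for chosen, rejected in comparisons:
            margin = reward(weights, chosen) - reward(weights, rejected)
            # d(loss)/d(margin) = -(1 - sigmoid(margin)); step against the gradient.
            scale = 1.0 - sigmoid(margin)
            for i in range(n_features):
                weights[i] += lr * scale * (chosen[i] - rejected[i])
    return weights


if __name__ == "__main__":
    # Each pair is (response the human labeller preferred, response they rejected).
    # Toy features are [helpfulness, rudeness]; the values are made up.
    comparisons = [
        ([0.9, 0.1], [0.2, 0.8]),
        ([0.7, 0.0], [0.6, 0.9]),
        ([0.8, 0.2], [0.3, 0.3]),
    ]
    weights = train_reward_model(comparisons, n_features=2)
    print("learned weights:", weights)
    for chosen, rejected in comparisons:
        print(reward(weights, chosen), ">", reward(weights, rejected))
```

In this toy, the learned weights end up rewarding the first feature and penalizing the second, because that is what the human comparisons consistently favoured. The human judgment lives entirely in which response was marked as preferred; the machine only generalizes it.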

RLHF is just one more step in the ever evolving human-machine work and feedback loop that started with humans…

building the internet which

generated initial human data

captured by machines for an algorithm

constructed by human researchers

trained on machines

now being fine tuned by humans

being extended by machines

The future of this process will continue to be a design and development process initiated between human minds and machines, both of which are instrumental. And their emergent behaviour, beautiful.

So as software evolves, so must its design practice. In its place, perhaps we might see new approaches emerge as generative tools become more accessible: a new “human-AI hybrid design” approach in which AI works alongside its human counterparts.

#3: “The future of work is human” is just plain wrong—we need AI to “win”

Thirdly (and relatedly), we actually need both AI and automation much more than we want to admit. A “human-AI hybrid design” is not just a nice idea, it’s something we might urgently need. The ultimate irony of the FoWH movement is that we no longer have the number of humans we need due to an aging population and future demographic collapse. For all the talk about the age of abundance, one thing we will not have in abundant supply is humans.

There are a few different drivers here, all of which might peak in parallel, making this an even more dire concern.

For one, demographic factors: in most developed countries, both productivity and growth in the labour force have declined, largely driven by aging populations. Growth in the working-age population has already peaked and families continue to have fewer kids. In his book, The End of the World Is Just the Beginning, geopolitical analyst Peter Zeihan dives deep into these trends, claiming that by 2020, birth rates had been so low for so long that even the countries with younger age structures are already running low on young adults. Birth rates will not simply continue to decline, but will collapse. The implications of this are just beginning to be understood.

“By 2020, we're going to cross over, and for the first time in history there are going to be more people who are over sixty-five years alive in the world than there are people under five.” – John Markoff via The Edge

We are already witnessing the early signs of this in the form of increasing labour shortages. In the US, there are over 10 million job openings, as it also continues to see lower labour force participation as workers retire. In Canada, job vacancies reached a peak in May 2022 at over one million vacancies. Industries like manufacturing, tourism, and hospitality are experiencing this more than others, as younger workers’ preferences shift and skilled employees retire. In late September 2022, the Canadian Manufacturers and Exporters (CME) wrote to the federal government calling for a plan to reduce severe labour shortages (mostly skilled trades) and stubbornly high inflation numbers. While inflation has started its slow descent, labour shortages persist: 42% of Canada's manufacturers (a sector worth 10% of Canadian GDP) have either paid penalties or lost work because of labour shortages.

Countries around the globe face similar pressures. China’s labour force, the “workshop of the world”, is alone expected to lose 1 million workers each year for the foreseeable future. Its aging population is growing more than twice as fast as that of the U.S. Meanwhile, policy interventions like baby bonuses have only minimal effect, and it can take years of training to get people into the jobs and skills most needed.

Second, globalization is in retreat. In a trend accelerated by the pandemic, the “global order” shows signs of crumbling due to supply chain disruptions, Chinese industrial policy slip-ups, price shocks, and wars. There are positive implications of “reshoring”, but the transition period is likely to be messy. For one, geopolitical tensions between the US and China have only escalated. In an ongoing trade war between the two global powers, the Biden Administration has restricted not only exports of US chips to China but also the important manufacturing inputs China uses to make its own. Not only is trade becoming more restricted, but reshoring activity has notably increased. Earlier this year, Bloomberg reported a 116% increase in the construction of new manufacturing facilities in the US, citing massive chip factories going up in Phoenix, aluminum and steel plants springing up in the south, and even the reopening of previously shuttered facilities.

The resulting state of affairs is one that necessitates more automation, not less.

For manufacturing specifically, it renders a picture of an aging manufacturing base at a time when youth are 3x more likely to look up to YouTubers and influencers than to astronauts, firefighters, and tradespeople when considering career options. Of course, young people change career preferences often as they grow up and enter the workforce. But when I and a few other analysts dug into the data from PISA assessments for a 2020 OECD study on youth career ambitions, collected at a time when students are making higher-ed decisions for their careers, we found similar trends: the top career picks for high schoolers were largely influenced by what they were most exposed to. Other efforts to better understand the disinterest in manufacturing specifically find the same thing:

“Manufacturing never interested me, partly because I was never exposed to it. No one in my extended family worked in manufacturing, and it was never strongly encouraged or explained in my grade school, despite living in the Rust Belt.” - student response via Industry Week

This brings us back to the central point: narratives of work need to be ones we are both thoughtful and intentional about. Just as reducing the manufacturing class to something “robotic” or outdated can dehumanize an essential sector of our economy, when we pit humans against the machines and claim we’ve “won”, we mislead the generation of tomorrow. Those who can cultivate creative thinking, get along with others, AND use emerging technologies effectively are always going to outshine those without those three capabilities.

A more effective narrative should place human-machine teams at the center—because with AI and automation, “workers can win”. As Noah Smith concludes in American workers need lots and lots of robots:

“America’s collective panic about automation is just another manifestation of the backward-looking, hunkered-down, defensive mode we adopted in the wake of the Great Recession and the social disruptions of the 2010s. But the 2010s are over, and the great robot freakout should be left in that decade where it belongs. If we try to fight the future, it will be our own workers who lose out.”

Expanding creative frontiers

“It’s not just a matter of the Lambertians out-explaining us. The whole idea of a creator tears itself apart. A universe with conscious beings either finds itself in the dust… or doesn’t… There never can, and never will be, Gods.” —Maria in Permutation City

“The machines will leap, and the humans will look. They will answer, and we will question. The glory of what they can do will push us closer and closer to the divine. They will do things we never thought possible, and sooner than we think. They will give answers that we ourselves could never have provided. But they will also reveal that our understanding, no matter how great, is always and forever negligible. Our role is not to answer but to question, and to let our questioning run headlong, reckless, into the inarticulate.” —Of Gods and Machines, The Atlantic

Seven thousand subjective years after launch, Maria and Paul awaken in Permutation City to the fact that a complex swarm of “eusocial beings” has evolved from Maria’s original experiments. Known as “The Lambertians”, these beings have a combined intelligence that, Maria and Paul quickly discover, exceeds that of the entire City. Together, Maria, Paul, and their simulations created new worlds of evolved intelligence of the highest social order.

This piece opened, not with a generative AI script writing for me, but with a reflection on a reading of a 1990s science fiction author known for his foresight. This was intentional. Because, while some of the examples illustrated in this post might seem like science fiction, the rate of progress is stunningly high. As Sequoia’s piece points out:

“... we have gone from narrow language models to code auto-complete in several years—and if we continue along this rate of change and follow a “Large Model Moore’s Law,” then these far-fetched scenarios may just enter the realm of the possible.”

“Those who can imagine anything, can create the impossible,” remarked Alan Turing, as he contemplated the question, “can machines think?” for his seminal 1950 paper that made him famous for what would become known as the Turing Test. Another brilliant mind, Arthur C. Clarke, also famously said that “any sufficiently advanced technology is indistinguishable from magic.” In almost every sense in the 21st century this appears to be true, which means gen AI is pure sorcery.

With large language models now trained with human input, any developer, product manager, entrepreneur, or person with internet access can tap into some of the most powerful and intelligent computation known in history. All one needs to learn is a skill called “prompt engineering”, a bit of coding, and maybe a bit of design (there are gen AI apps for those things, too) to test new product ideas. This has spurred a very recent “Silicon Valley gold rush” to find the applications that generative AI can now power and to redefine how work is done. This not only means that humans and machines can co-create, it means the essential skills of tomorrow are emergent within this context, a few of which might include AI-directed narration, recombination, and generalization.

To fear AI as a potential threat, or to regard humans as inherently better, likely stems from self-preservation and other psychological instincts. But even one of the world’s most well-known behavioural economists, Daniel Kahneman, will tell you that “the robots can’t get here fast enough”. The ultimate footnote of our future history lies in how fast we deploy automation at scale and train our people to develop their own superpowers with AI.

So, perhaps then, AI is the magic we need, not only to automate our factories, but also to help us solve persistent social challenges our human brains still haven’t figured out. These include problems in education, work, and social systems that are long overdue for transformation. But first we must realize that we limit ourselves, today’s workers, and our youth when we perpetuate misguided and antagonistic narratives of humans vs AI. Rather than empowering workers’ agency, we fail to equip them with the in-demand skills and tools to deal with the very real future of work, one that is increasingly both human and machine.

In other words, the future of work likely resides somewhere between the foresight of Alan Turing and the wisdom of Lady Lovelace. Turing was bullish on AI’s ability to be creative. In contrast, Lovelace believed a machine could only do what its human counterpart instructed it to do. But as I’ve argued, I think our future depends on the emergent relationship between the two—the expansiveness of our creativity and ability to create new worlds depends more and more on the capabilities we develop with our AI partners.

This writing was in part a reflection on the past 5 years since jumping into the intersection of AI and the future of work. As a government analyst in 2017, I remember visceral fears and uncertainty around the impact of AI on jobs and employment. I left government to become a founder because I wanted to shape that fear into new possibilities for the future of work.

I started by asking the question “is the future of work unimaginable?” to probe those possibilities, and have since come a long way in answering it. With gen AI, I am particularly excited because I truly believe the future is anything we might co-imagine it to be, both human and machine.

Much thanks to the human edits and contributions of my friend, Ted Schelble.
